Posts Tagged: comments

Elon Musk will go to court over ‘pedo guy’ comments

Engadget RSS Feed

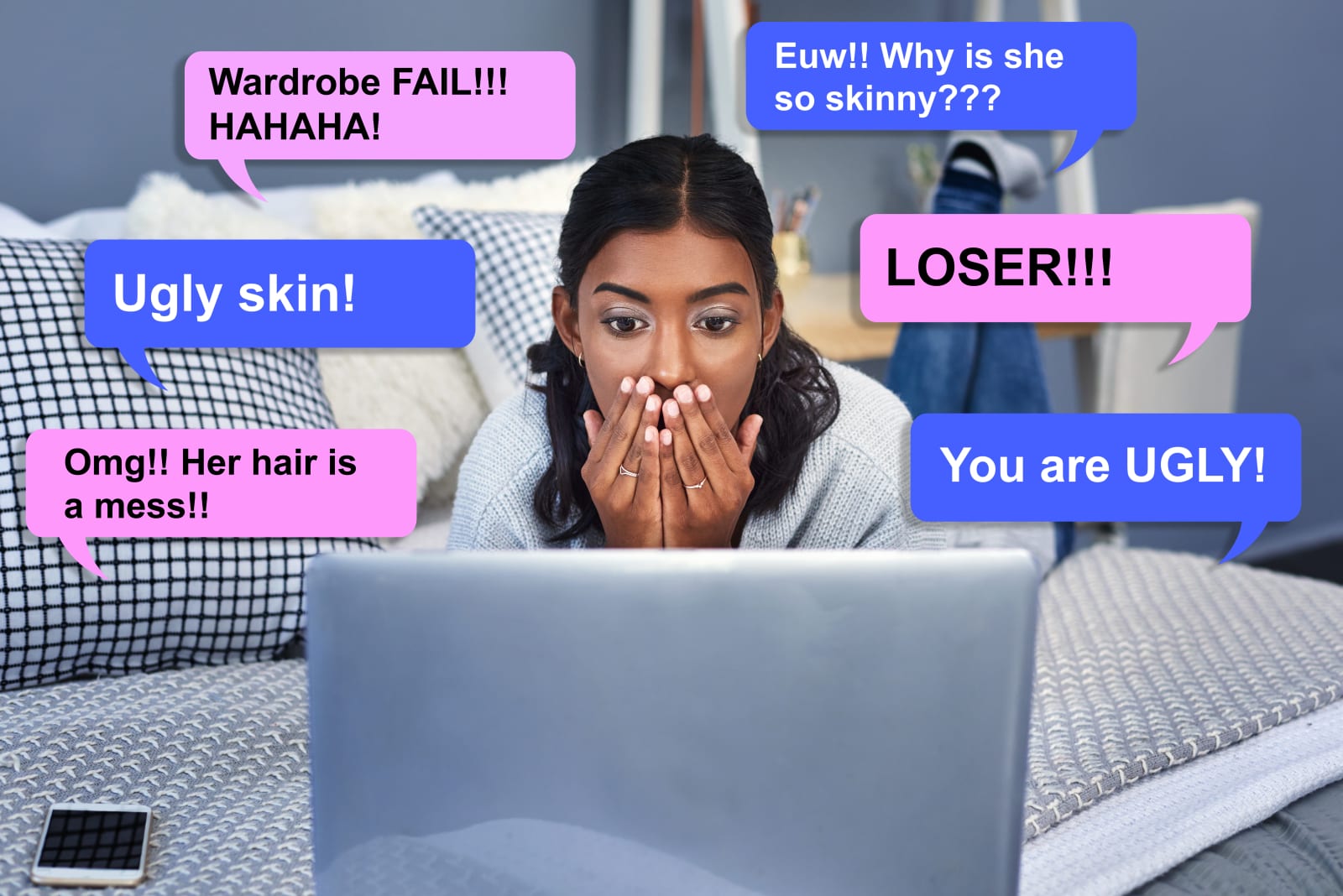

Killing comments won’t cure our toxic internet culture

Engadget RSS Feed

Faster removals and tackling comments — an update on what we’re doing to enforce YouTube’s Community Guidelines

We are committed to tackling the challenge of quickly removing content that violates our Community Guidelines and reporting on our progress. That’s why in April we launched a quarterly YouTube Community Guidelines Enforcement Report. As part of this ongoing commitment to transparency, today we’re expanding the report to include additional data like channel removals, the number of comments removed, and the policy reason why a video or channel was removed.

Focus on removing violative content before it is viewed

We previously shared how technology is helping our human review teams remove content at a speed and volume that could not be achieved with people alone. Finding all violative content on YouTube is an immense challenge, but we see this as one of our core responsibilities and are focused on continuously working towards removing this content before it is widely viewed.

- From July to September 2018, we removed 7.8 million videos

- And 81% of these videos were first detected by machines

- Of those detected by machines, 74.5% had never received a single view
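To put the three figures above on a common scale, here is a minimal arithmetic sketch. It uses only the rounded numbers quoted in the list, so the results are approximations, and the variable names are illustrative rather than anything taken from the report.

```python
# Figures quoted in the post for July to September 2018 (rounded in the source).
videos_removed = 7_800_000
machine_detected_share = 0.81  # share of removals first detected by machines
never_viewed_share = 0.745     # share of machine-detected removals with zero views

# Derived counts: approximate, because the inputs are rounded.
machine_detected = videos_removed * machine_detected_share
never_viewed = machine_detected * never_viewed_share

print(f"Machine-detected removals: ~{machine_detected / 1e6:.1f} million")
print(f"Machine-detected and never viewed: ~{never_viewed / 1e6:.1f} million")
print(f"As a share of all removals: ~{never_viewed / videos_removed:.0%}")
```

The last line covers only machine-detected videos. The post gives no view data for videos flagged by people, so the overall share of removals with zero views cannot be derived from these figures.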

When we detect a video that violates our Guidelines, we remove the video and apply a strike to the channel. We terminate entire channels if they are dedicated to posting content prohibited by our Community Guidelines or contain a single egregious violation, like child sexual exploitation. The vast majority of attempted abuse comes from bad actors trying to upload spam or adult content: over 90% of the channels and over 80% of the videos that we removed in September 2018 were removed for violating our policies on spam or adult content.

Looking specifically at the most egregious but low-volume areas, like violent extremism and child safety, our significant investment in fighting this type of content is having an impact: well over 90% of the videos uploaded in September 2018 and removed for Violent Extremism or Child Safety had fewer than 10 views.

Each quarter we may see these numbers fluctuate, especially when our teams tighten our policies or enforcement on a certain category to remove more content. For example, over the last year we’ve strengthened our child safety enforcement, regularly consulting with experts to make sure our policies capture a broad range of content that may be harmful to children, including things like minors fighting or engaging in potentially dangerous dares. Accordingly, we saw that 10.2% of video removals were for child safety, while Child Sexual Abuse Material (CSAM) represents a fraction of a percent of the content we remove.

Making comments safer

As with videos, we use a combination of smart detection technology and human reviewers to flag, review, and remove spam, hate speech, and other abuse in comments.

We’ve also built tools that allow creators to moderate comments on their videos. For example, creators can choose to hold all comments for review, or to automatically hold comments that have links or may contain offensive content. Over one million creators now use these tools to moderate their channel’s comments. [1]
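The hold-for-review options described above can be pictured as a small rule check. The sketch below is purely hypothetical: `ModerationSettings`, `should_hold`, the link pattern, and the blocked-term list are all assumptions made for illustration, not YouTube's actual tools or API, and a plain word list stands in for whatever detection of potentially offensive content the real tools use.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately simple link detector (an assumption for this sketch).
LINK_PATTERN = re.compile(r"https?://|www\.", re.IGNORECASE)


@dataclass
class ModerationSettings:
    """Hypothetical per-channel options mirroring the choices named in the post."""
    hold_all: bool = False
    hold_links: bool = False
    hold_potentially_offensive: bool = False
    # Stand-in word list; real offensive-content detection is not described in the post.
    blocked_terms: frozenset = frozenset()


def should_hold(comment: str, settings: ModerationSettings) -> bool:
    """Return True if the comment should wait for the creator's review."""
    if settings.hold_all:
        return True
    if settings.hold_links and LINK_PATTERN.search(comment):
        return True
    if settings.hold_potentially_offensive:
        words = set(re.findall(r"[a-z']+", comment.lower()))
        if words & settings.blocked_terms:
            return True
    return False


settings = ModerationSettings(hold_links=True)
print(should_hold("Great video!", settings))
print(should_hold("Free stuff at www.example.com", settings))
```

Checking `hold_all` first means the broadest setting overrides the narrower ones, which keeps the three options independent of each other.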

We’ve also been increasing our enforcement against violative comments:

- From July to September of 2018, our teams removed over 224 million comments for violating our Community Guidelines.

- The majority of removals were for spam, and the total number of removals represents a fraction of the billions of comments posted on YouTube each quarter.

- As we have removed more comments, we’ve seen our comment ecosystem actually grow, not shrink. Daily users are 11% more likely to be commenters than they were last year.

We are committed to making sure that YouTube remains a vibrant community, where creativity flourishes, independent creators make their living, and people connect worldwide over shared passions and interests. That means we will be unwavering in our fight against bad actors on our platform and our efforts to remove egregious content before it is viewed. We know there is more work to do and we are continuing to invest in people and technology to remove violative content quickly. We look forward to providing you with more updates.

YouTube Team

1 Creator comment removals on their own channels are not included in our reporting as they are based on opt-in creator tools and not a review by our teams to determine a Community Guidelines violation.

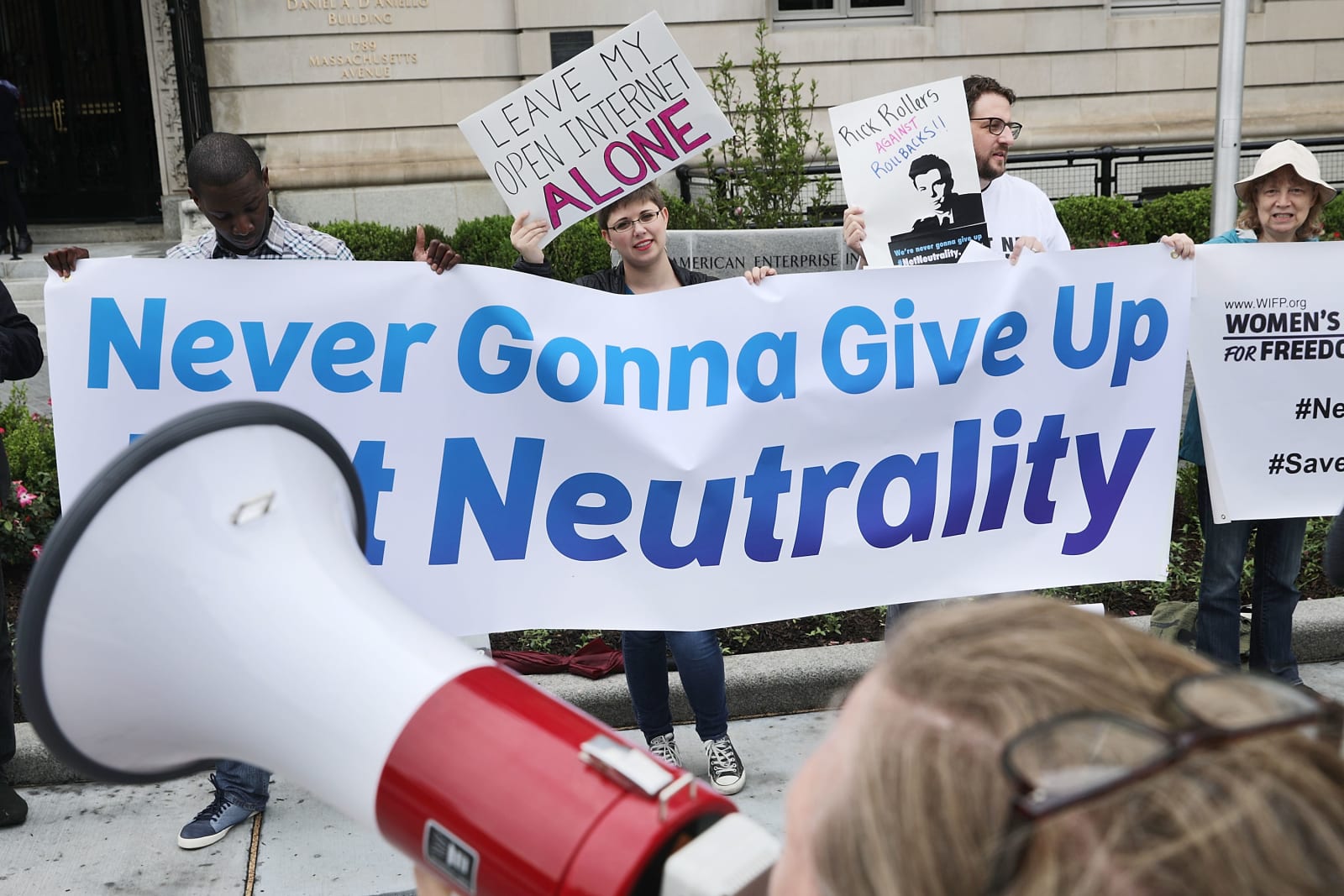

Over 1.3 million anti-net neutrality FCC comments are likely fakes

Engadget RSS Feed

Mark Zuckerberg explains post-election comments he now ‘regrets’

Last November, Mark Zuckerberg said, "Personally, I think the idea that fake news on Facebook, of which it's a very small amount of the content, influenced the election in any way is a pretty crazy idea." Nearly a year later, and after evidence has b…

Engadget RSS Feed

Twitch comments for pre-recorded videos are like a slow chatroom

If we've learned anything from experiments like Twitch Plays Pokemon, it's that a large part of the streaming site's success lies in the enthusiasm of its community. Twitch viewers don't just watch streams, they participate by flooding their favorite…

Engadget RSS Feed

Are your Instagram comments not showing up? You’re not alone

Comments aren’t showing up for some Instagram users. Here’s everything we know about the issue and if it’s going to be fixed.

Digital Trends